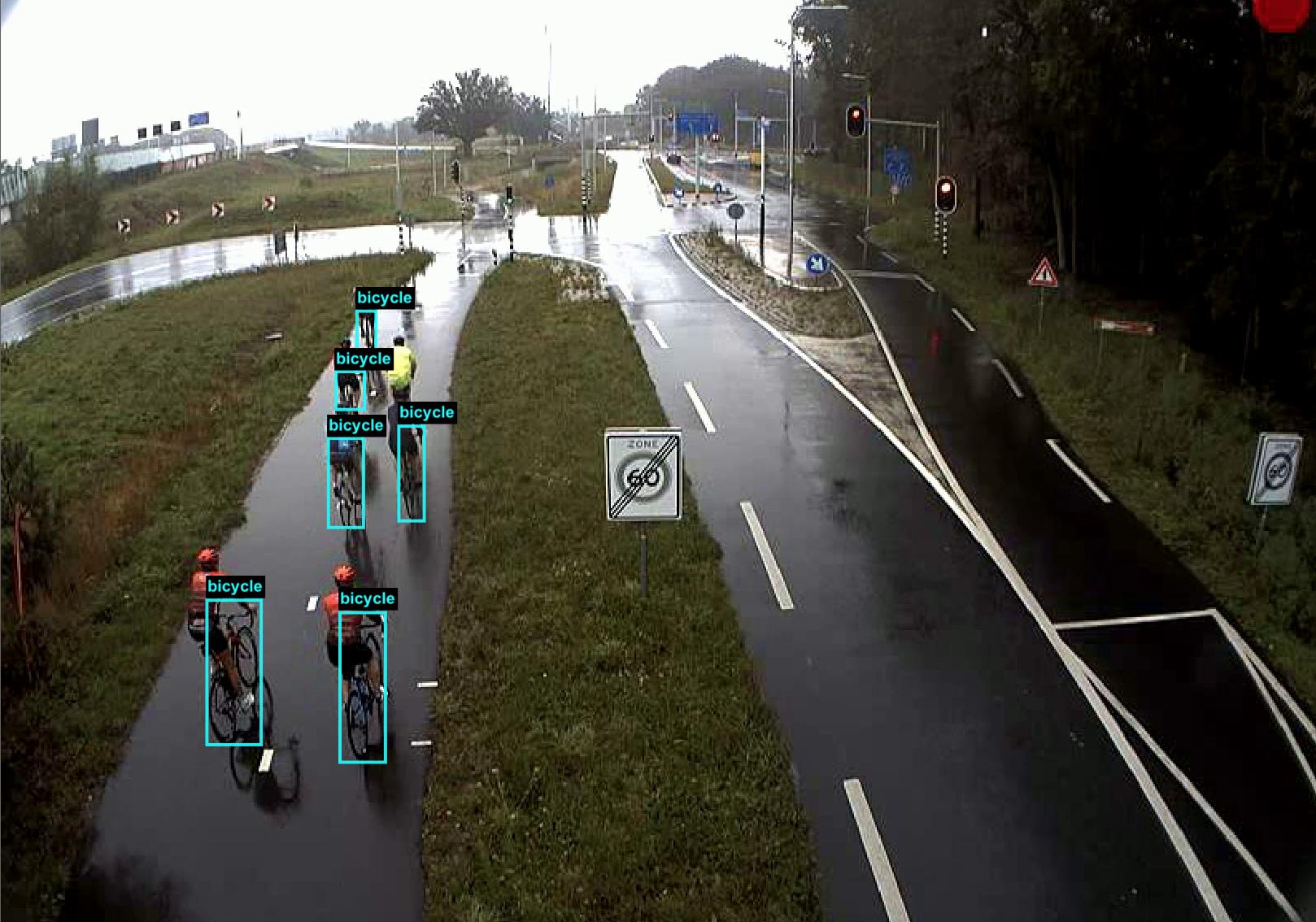

Traffic analysis is currently a laborious task performed manually by human experts. Object detection models could

potentially speed up this process significantly. This research explores which object detection models are most suitable for detecting vulnerable road users, such as bicycles, scooters, and pedestrians, in traffic camera footage. In this work, we compared Faster-RCNN, YOLOv5, an ensemble of YOLOv5 models, and a YOLOv5 model trained on the ensemble’s output (surrogate model) to see which approach resulted in the best accuracy. The models were trained and validated on the Specialized Cyclist, Berkley Deep Drive 100K, and the MB10000 datasets. Additionally, we created a custom dataset used for fine-tuning and testing. This dataset contains images from a traffic intersection in the province of Utrecht.

Three experiments were conducted to examine which object detection models are most suitable for traffic analysis. Experiment 1 compared Faster-RCNN to YOLOv5s and YOLOv5m to assess the most accurate model. In experiment 2, we analyzed the differences between using and not using pre-trained weights before training. Finally, in experiment 3, a fine-tuned YOLOv5 model was compared to the fine-tuned ensemble and surrogate models. Faster-RCNN had the best accuracy in the first experiment with 0.88 mAP, 0.81 F1-score, 0.77 precision, and 0.85 recall. Experiment 2 demonstrated that pre-training on the external datasets resulted in better scooter and pedestrian detection accuracy but worse performance for cyclist detection. Finally, the third experiment revealed that a fine-tuned model pre-trained on the combined external datasets was more accurate than the ensemble and surrogate models. We concluded that Faster-RCNN and YOLOv5 trained only on our dataset could detect vulnerable road users with high accuracy. Furthermore, it was not beneficial to use ensemble and surrogate models over a model trained on combined external datasets in our case.